Previously, I showed how to interpret R-squared (R²). I also showed how it can be a misleading statistic, because a low R-squared isn't necessarily bad and a high R-squared isn't necessarily good. Clearly, there is no single answer to how high R-squared should be. In this post, I'll help you answer this question more precisely.


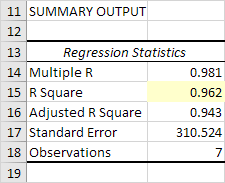

In this article, we'll discuss the math behind R-squared along with a few important concepts: explained variation, unexplained variation and total variation. We'll implement R-squared and adjusted R-squared in Python. We'll also see why adjusted R-squared is a reliable measure of goodness of fit for multiple regression problems.

Background

Before proceeding with R-squared, it is essential to understand a few terms: total variation, explained variation and unexplained variation. Imagine a world without predictive modeling in which we are asked to predict the price of a house, given only the prices of other houses. In such cases, we have no option but to choose the most representative value, i.e. the mean of the other house prices, as our prediction. For example, suppose we have the prices of 100 houses with a mean price of $100,000 and are asked to predict the price of a new house. Our prediction for the new house would be $100,000, as we have no other data to help with the prediction.

In the plots above, the models with a high adjusted R-squared seem to fit better than the ones with a lower adjusted R-squared. However, this interpretation may not always hold.

Below are two questions that beginners frequently ask about R-squared.

Is a high R-squared always good? If the training set's R-squared is high and the validation set's R-squared is much lower, it indicates overfitting. If the same high R-squared carries over to the validation set, then we can say that the model is a good fit.

What counts as a good R-squared? This depends entirely on the type of problem being solved. In some problems that are hard to model, even an R-squared of 0.5 may be considered good. There is no rule of thumb for declaring an R-squared good or bad. However, a very low R-squared indicates underfitting, and adding relevant features or using a more complex model might help.

We've discussed the math behind R-squared and implemented it in Python. We've seen in practice why adjusted R-squared is a more reliable measure of goodness of fit in multiple regression problems. We've also discussed how to interpret R-squared and how to detect overfitting and underfitting with it.

This chapter discusses some terms that are used in correlation analysis and linear regression. Multiple R is the multiple correlation coefficient, i.e. the correlation between the observed values of the dependent variable and the fitted values. With a single predictor it equals the absolute value of the Pearson product-moment correlation coefficient, also known as PPMCC, PCC, or Pearson's r.

The regression of Y on X1 and X2 in R, followed by four expressions that all give the same value:

    X1 <- c(1, 1, 1, 1, 2, 2)
    # X2 and Y are numeric vectors of the same length as X1
    fm <- lm(Y ~ X1 + X2)      # regression of Y on X1 and X2
    fm_null <- lm(Y ~ 1)       # null model: intercept only, no predictors

    var(fitted(fm)) / var(Y)               # ratio of variances
    cor(fitted(fm), Y)^2                   # squared correlation
    summary(fm)$r.squared                  # multiple R squared
    1 - deviance(fm) / deviance(fm_null)   # improvement in residual sum of squares

We can repeat this with the `birthwt` data from the MASS package, using a model that has only a single predictor:

    library(MASS)
    fm <- lm(bwt ~ lwt, data = birthwt)    # single predictor

    var(fitted(fm)) / var(birthwt$bwt)     # ratio of variances
    cor(fitted(fm), birthwt$bwt)^2         # squared correlation

In the special case of a single predictor we have additional equalities which we can add to the above list:

- The square of the correlation between the dependent variable and that predictor equals each of the above.
- The coefficient of the predictor times the ratio of the standard deviation of the predictor to that of the dependent variable equals the correlation, so the square of all that equals the multiple R squared.
- If we standardize the dependent variable and the predictor to each have mean 0 and standard deviation 1, then the coefficient of the predictor equals the correlation between the dependent variable and the predictor, so its square equals the multiple R squared.

Thus we have these three additional ways to view R squared (6 in total) in the case of a single predictor.

    cor(birthwt$lwt, birthwt$bwt)^2                             # squared correlation
    (coef(fm)[["lwt"]] * sd(birthwt$lwt) / sd(birthwt$bwt))^2   # squared rescaled slope
    fm0 <- lm(bwt ~ lwt, as.data.…
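To tie the house-price baseline to the single-predictor equalities, here is a minimal Python sketch using only the standard library. The floor areas and prices are made-up illustrative numbers (chosen so that the mean price is 100, in thousands of dollars), and the helper names `fit_line`, `pearson_r` and `standardize` are hypothetical, not taken from the text or from any library.

```python
from math import sqrt


def mean(values):
    return sum(values) / len(values)


def sample_sd(values):
    """Sample standard deviation (divides by n - 1, like R's sd)."""
    m = mean(values)
    return sqrt(sum((v - m) ** 2 for v in values) / (len(values) - 1))


def fit_line(x, y):
    """Least-squares (intercept, slope) for y = intercept + slope * x."""
    mx, my = mean(x), mean(y)
    slope = (sum((a - mx) * (b - my) for a, b in zip(x, y))
             / sum((a - mx) ** 2 for a in x))
    return my - slope * mx, slope


def pearson_r(x, y):
    """Pearson correlation coefficient between x and y."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    return cov / sqrt(sum((a - mx) ** 2 for a in x)
                      * sum((b - my) ** 2 for b in y))


def standardize(values):
    """Rescale to mean 0 and sample standard deviation 1."""
    m, s = mean(values), sample_sd(values)
    return [(v - m) / s for v in values]


# Hypothetical data: floor area (square metres) and price (thousands of dollars).
area = [50.0, 65.0, 80.0, 95.0, 110.0, 140.0]
price = [70.0, 82.0, 95.0, 104.0, 118.0, 131.0]

# With no predictor, the best available guess is the mean price.
baseline = mean(price)

intercept, slope = fit_line(area, price)
fitted = [intercept + slope * a for a in area]

total_variation = sum((p - baseline) ** 2 for p in price)
unexplained_variation = sum((p - f) ** 2 for p, f in zip(price, fitted))
explained_variation = sum((f - baseline) ** 2 for f in fitted)

r_squared = 1 - unexplained_variation / total_variation

# The three additional single-predictor views of R squared.
r2_from_correlation = pearson_r(area, price) ** 2
r2_from_scaled_slope = (slope * sample_sd(area) / sample_sd(price)) ** 2
_, standardized_slope = fit_line(standardize(area), standardize(price))
r2_from_standardized_slope = standardized_slope ** 2

print("baseline prediction:", baseline)
print("R squared:", r_squared)
print("single-predictor views:", r2_from_correlation,
      r2_from_scaled_slope, r2_from_standardized_slope)
```

All four R-squared values should agree up to floating-point rounding, and the explained and unexplained variation should add up to the total variation, because the line is fitted by least squares with an intercept.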

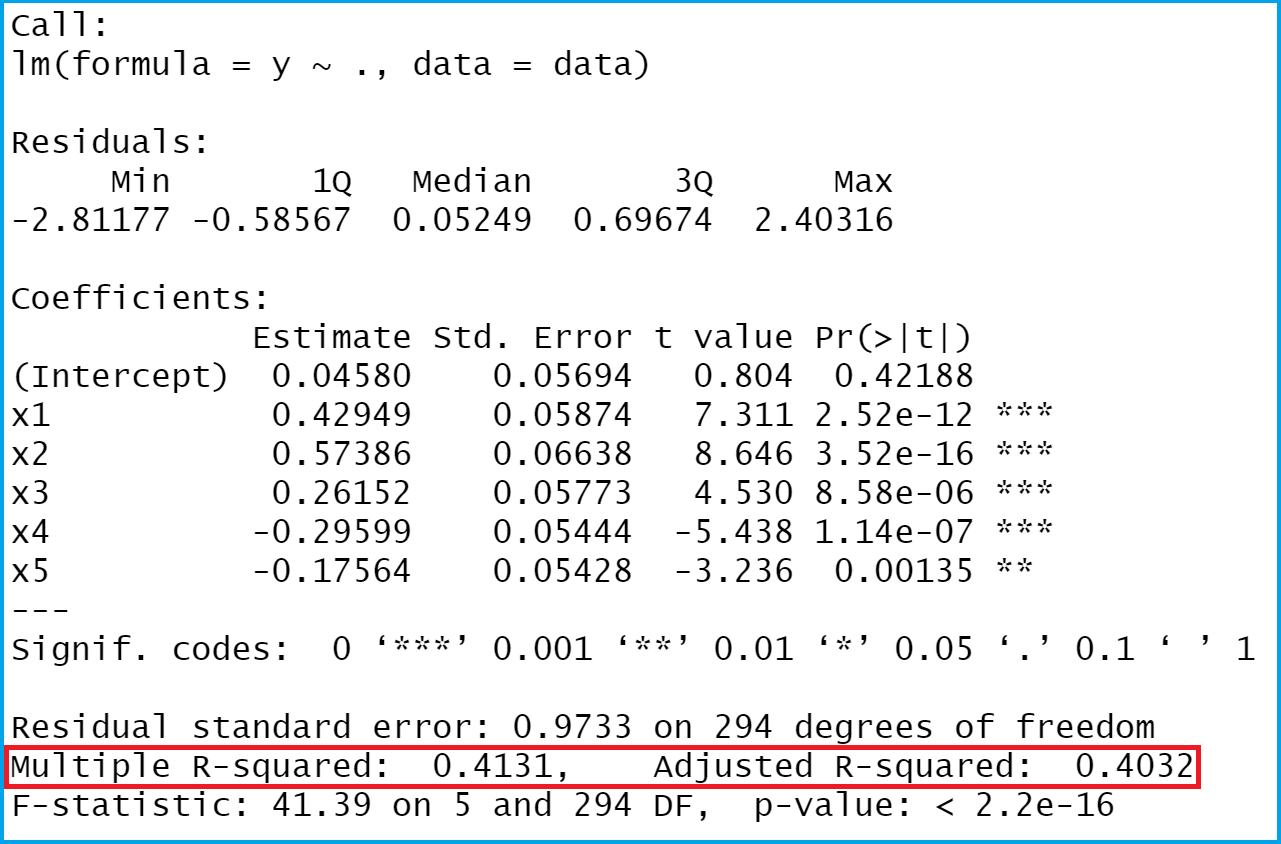

In general, these four quantities are the same, giving three different ways to think about R squared:

- the variance of the fitted values divided by the variance of the dependent variable (i.e. the regression "explains" that proportion of the variance of the dependent variable)
- the square of the correlation between the fitted values and the dependent variable
- the proportional improvement in residual sum of squares from the null model to the fitted model. (The null model has the same dependent variable but only an intercept with no predictors.)

In the R example above, `X1` and `X2` are the predictors, `Y` is the dependent variable, `fm` is the regression object and `fitted(fm)` are the fitted values. (The fitted values are the values of `Y` predicted by the right hand side of the regression equation.) `fm_null` is the regression object of the null regression model (as described above) and `deviance(fm)` refers to the residual sum of squares of the indicated model.
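The equality of these quantities can be checked numerically. The following is a rough Python sketch (standard library only) for a regression with two predictors, solved through the normal equations on centered data. `x1` reuses the `X1` values from the R example; `x2` and `y` are made-up illustrative values, and `fit_two_predictors` is a hypothetical helper name. The adjusted R-squared line uses the standard formula 1 - (1 - R²)(n - 1)/(n - p - 1), which is an addition not shown in the text.

```python
from math import sqrt


def mean(values):
    return sum(values) / len(values)


def variance(values):
    """Sample variance (divides by n - 1, like R's var)."""
    m = mean(values)
    return sum((v - m) ** 2 for v in values) / (len(values) - 1)


def pearson_r(x, y):
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    return cov / sqrt(sum((a - mx) ** 2 for a in x)
                      * sum((b - my) ** 2 for b in y))


def fit_two_predictors(x1, x2, y):
    """Least-squares (b0, b1, b2) for y = b0 + b1 * x1 + b2 * x2."""
    m1, m2, my = mean(x1), mean(x2), mean(y)
    c1 = [a - m1 for a in x1]
    c2 = [a - m2 for a in x2]
    cy = [a - my for a in y]
    s11 = sum(a * a for a in c1)
    s22 = sum(a * a for a in c2)
    s12 = sum(a * b for a, b in zip(c1, c2))
    s1y = sum(a * b for a, b in zip(c1, cy))
    s2y = sum(a * b for a, b in zip(c2, cy))
    det = s11 * s22 - s12 * s12  # zero only if the predictors are collinear
    b1 = (s22 * s1y - s12 * s2y) / det
    b2 = (s11 * s2y - s12 * s1y) / det
    return my - b1 * m1 - b2 * m2, b1, b2


x1 = [1.0, 1.0, 1.0, 1.0, 2.0, 2.0]    # X1 from the R example
x2 = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]    # made-up values
y = [2.0, 5.0, 7.0, 8.0, 11.0, 14.0]   # made-up values

b0, b1, b2 = fit_two_predictors(x1, x2, y)
fitted = [b0 + b1 * u + b2 * v for u, v in zip(x1, x2)]

# Residual sums of squares of the fitted model and of the intercept-only null model.
rss_fitted = sum((obs - fit) ** 2 for obs, fit in zip(y, fitted))
rss_null = sum((obs - mean(y)) ** 2 for obs in y)

ratio_of_variances = variance(fitted) / variance(y)
squared_correlation = pearson_r(fitted, y) ** 2
rss_improvement = 1 - rss_fitted / rss_null

# Adjusted R squared with n observations and p predictors.
n, p = len(y), 2
adjusted_r_squared = 1 - (1 - rss_improvement) * (n - 1) / (n - p - 1)

print(ratio_of_variances, squared_correlation, rss_improvement)
print("adjusted R squared:", adjusted_r_squared)
```

The three printed R-squared values should match up to rounding. The adjusted value is never larger than the unadjusted one, since it penalizes the number of predictors.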